|

I am a PhD student in the Robotics Institute at Carnegie Mellon University, advised by Professor George Kantor in the Kantor Lab. I received my B.S. in ECE from Cornell University and my Master's in Robotics from CMU, where I worked on computer vision, 3D reconstruction, and next-best-view planning to phenotype small crops in agriculture. Prior to CMU, I was a senior embedded software engineer at Amazon Web Services in the AI Devices division, working on AWS Panorama. Email / CV / Google Scholar / LinkedIn / GitHub |

|

|

My research interests lie at the intersection of 3D reconstruction, robotic manipulation, and learning from human demonstration. I aim to enable robots to perform complex tasks in diverse and unstructured environments such as agriculture. I am currently working on cross-embodiment learning from human demonstration using Gaussian splatting. |

|

Harry Freeman, Chung Hee Kim, George Kantor In Submission [PDF] [Video] WARPED: A framework that synthesizes realistic wrist-view observations and actions from egocentric human demonstration videos to facilitate the training of visuomotor policies using only a single monocular RGB camera. |

|

|

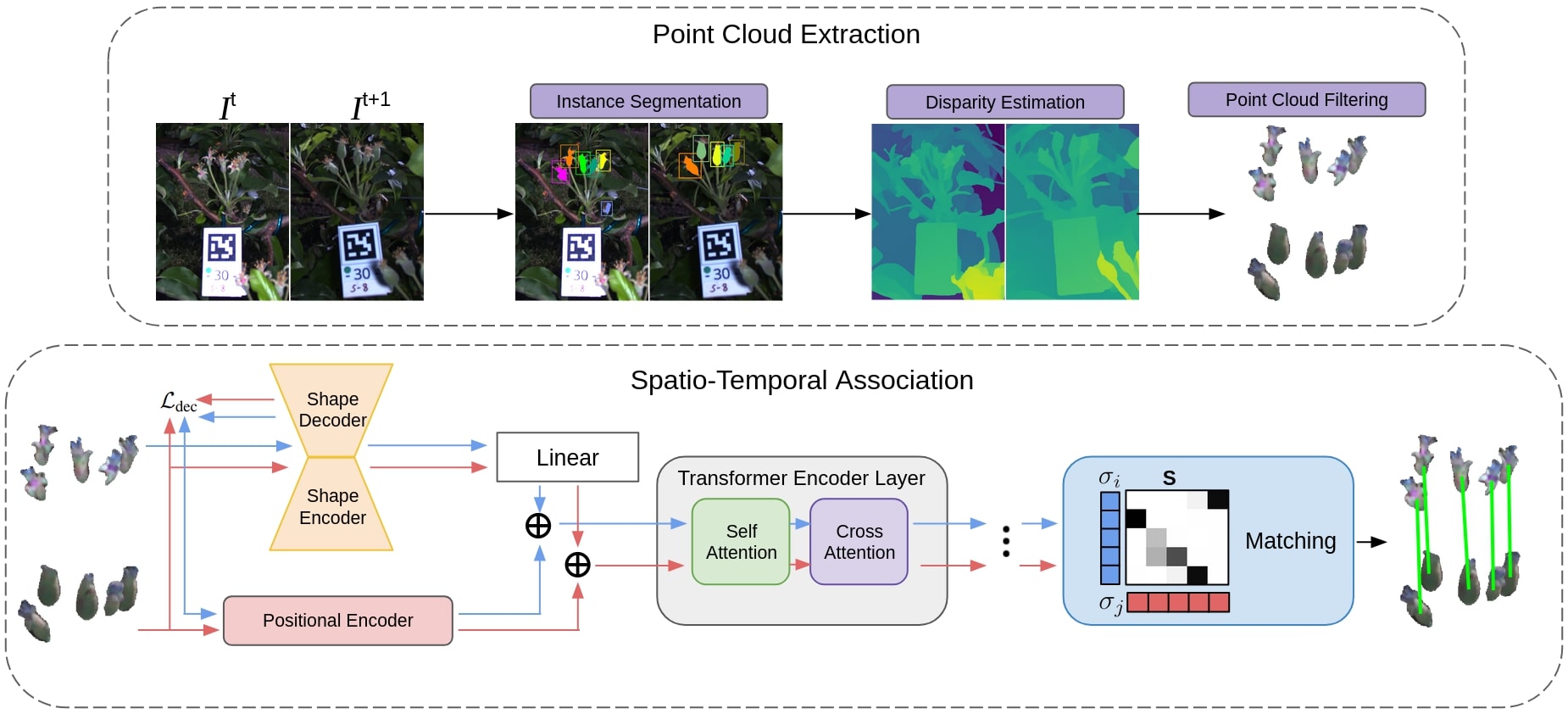

Harry Freeman, George Kantor Accepted to IEEE International Conference on Intelligent Robots and Systems (IROS), 2025 [arXiv] [PDF] [Video] Created a method for temporal apple fruitlet association using stereo images and transformers. The method achieves an F1 matching accuracy of 92.4% on a new dataset collected over 3 years and covering 3 different varietals. We demonstrate that our transformer architecture generalizes to other datasets and modalities. |

|

Harry Freeman, George Kantor Accepted to International Conference on Robotics and Automation (ICRA), 2024 [arXiv] [PDF] [Video] Developed a novel next-best-view planning approach that enables a 7-DoF robotic arm to autonomously capture images of apple fruitlets. Used a coarse-and-fine dual-map representation along with an attention-guided information gain formulation to determine the next best camera pose. Presented a robust estimation and graph clustering approach to associate fruit detections across images in the presence of wind and sensor error. |

|

|

Harry Freeman, Eric Schneider, Chung Hee Kim, Moonyoung Lee, George Kantor International Conference on Robotics and Automation (ICRA), 2023 [arXiv] [PDF] [Dataset] [Video] We develop a method for creating high-quality 3D models of sorghum panicles to non-destructively estimate seed counts. This is achieved using seeds as semantic 3D landmarks for global registration and a novel density-based clustering approach. Additionally, we present an unsupervised metric to assess point cloud reconstruction quality in the absence of ground truth. |

|

|

Mohamad Qadri, Harry Freeman, Eric Schneider, George Kantor AI for Agriculture and Food Systems (AIAFS, AAAI), 2022 [PDF] [Video] We demonstrate how semantics and environmental priors can help construct accurate 3D maps for downstream agricultural tasks, with sorghum phenotyping as the target application. |

|

Cherie Ho, Andrew Jong, Harry Freeman, Rohan Rao, Rogerio Bonatti, Sebastian Scherer International Conference on Intelligent Robots and Systems (IROS), 2021 [arXiv] [PDF] [Video] We build a real-time aerial system for multi-camera control that can reconstruct human motions in natural environments without the use of special-purpose markers. This is achieved with a multi-robot coordination scheme that maintains the optimal flight formation for target reconstruction quality among obstacles.